
This web page describes the level of significance and how it relates to p-values. These concepts are sometimes mixed up, and reading this page will enable you to understand the difference. The page also contains online calculators that will help you.

You will understand this page best if you have read Introduction to statistics and Inferential statistics.

The difference between level of significance (alpha) and the p-value

A low p-value indicates that it is highly unlikely we would see our data if the effect or correlation we are looking for were truly zero. In other words, a low p-value allows us to reject the null hypothesis in favor of the alternative hypothesis. But exactly how low must the p-value be for us to take this step? This threshold should be determined from case to case and is known as the level of significance, or alpha.

We use inferential statistics to calculate a p-value. The next step is to compare this calculated p-value against our predetermined level of significance (alpha). If the p-value is below alpha, we reject the null hypothesis and consider the alternative hypothesis to be the most plausible. Conversely, if the p-value is higher than alpha, we cannot reject the null hypothesis; our results do not provide enough evidence to contradict it.

By tradition, alpha is most often set to 0.05, and p-values ≤0.05 are called statistically significant. Therefore, a p-value of 0.045 would be classified as statistically significant, while a p-value of 0.055 would not. However, it is crucial to remember that this 0.05 threshold is not black and white. In reality, findings of p=0.045 and p=0.055 are statistically very similar. Because of this, exact p-values should always be reported, rather than simply stating whether a finding was “significant” or not.

In summary: The level of significance (alpha) is a fixed limit determined by the researcher, usually in advance. It does not depend on our observations and is not calculated; rather, it is a conscious decision based on the safety margin needed to avoid making a Type I error (a false positive). The p-value, on the other hand, is calculated directly from—and depends entirely on—our observed data.
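The decision rule can be sketched in a few lines. This is a minimal illustration that assumes a two-sided z-test; the function names and the observed statistic `z_observed` are hypothetical and not taken from any particular study.

```python
from statistics import NormalDist


def two_sided_p_value(z: float) -> float:
    """Two-sided p-value for a z statistic under a standard normal null."""
    return 2.0 * (1.0 - NormalDist().cdf(abs(z)))


def is_significant(p_value: float, alpha: float = 0.05) -> bool:
    """Decision rule: reject the null hypothesis when p is at or below alpha."""
    return p_value <= alpha


alpha = 0.05       # fixed by the researcher in advance, independent of the data
z_observed = 2.1   # hypothetical test statistic calculated from the data
p = two_sided_p_value(z_observed)
print(f"p = {p:.4f}, reject null hypothesis: {is_significant(p, alpha)}")
```

Note that `alpha` is set before any data are seen, while `p` changes with every new value of `z_observed`.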

The level of significance and pure chance

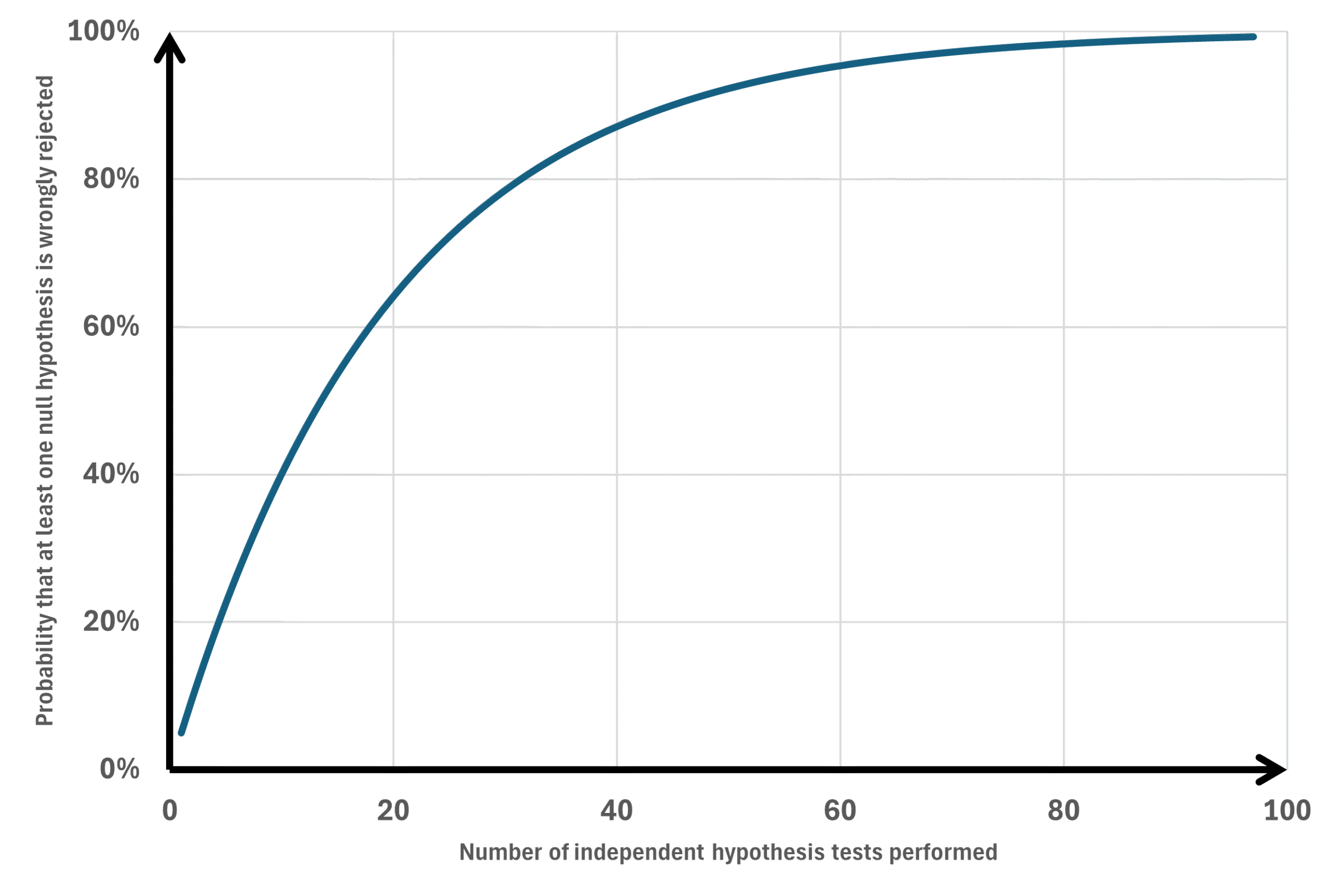

Assume we want to know which variables differ between two groups, those who have experienced an illness compared to those who have not (or it could be those who say yes to a question compared to those who say no). Also assume that we want to investigate 50 different variables, some being categorical while others are continuous. Using a chi-square test or a t-test to compare the two groups would result in 50 p-values, some below and some above 0.05. However, a p-value ≤0.05 can occur by pure chance without representing a real difference between groups. The probability of wrongly rejecting the null hypothesis (believing that a low p-value represents something other than pure chance) increases the more tests we do (Figure 1).
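A small simulation may make this concrete. It is only a sketch under two assumptions: every null hypothesis is true, and the 50 tests are independent, so each p-value can be represented by a uniform random number between 0 and 1. The function name `simulate_null_tests` is made up for this illustration.

```python
import random


def simulate_null_tests(n_tests: int = 50, alpha: float = 0.05,
                        n_studies: int = 10_000, seed: int = 1) -> tuple[float, float]:
    """Simulate studies in which no real difference exists for any variable.

    Under a true null hypothesis a p-value is uniformly distributed on [0, 1],
    so each test is represented by one uniform random draw.
    Returns (mean number of false positives per study,
             share of studies with at least one false positive).
    """
    rng = random.Random(seed)
    total_false_positives = 0
    studies_with_any = 0
    for _ in range(n_studies):
        hits = sum(rng.random() <= alpha for _ in range(n_tests))
        total_false_positives += hits
        studies_with_any += hits > 0
    return total_false_positives / n_studies, studies_with_any / n_studies


mean_hits, share_any = simulate_null_tests()
print(f"mean number of false positives per study: {mean_hits:.2f}")
print(f"studies with at least one false positive: {share_any:.1%}")
```

With 50 tests at alpha 0.05, one would expect about 2.5 chance findings per study on average, and the vast majority of simulated studies contain at least one.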

Deciding the level of significance

To compensate for the possibility of getting statistical significance by pure chance when we do multiple testing, we need to lower the limit at which we consider a finding statistically significant as soon as we present more than one p-value. There are different methods to do this.

Family-Wise Error Rate (FWER) and False Discovery Rate (FDR)

Family-Wise Error Rate (FWER) is the probability of making at least one Type I error (that is, at least one false positive) across a family of statistical tests. In simpler terms: If you run several hypothesis tests, FWER is the chance that one or more of them incorrectly appears statistically significant just by chance (Figure 1). For independent tests the formula is 1-(1-alpha)^m (m=number of statistical tests). For example, if we make 20 statistical tests at alpha = 0.05, the chance of making at least one Type I error is:

1-(1-0.05)^20 ≈ 0.64

In this example there is a 64% chance that at least one statistical test is a false positive. FWER is concerned with controlling the probability of any such false discovery in the whole set of tests. FWER is stricter than the False Discovery Rate (FDR):

- FWER controls the chance of making any false positive.

- FDR controls the expected proportion of false positives among the statistically significant findings.
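The FWER formula 1-(1-alpha)^m is easy to evaluate for different numbers of tests. The short sketch below assumes independent tests; the function name `family_wise_error_rate` is chosen here for illustration only.

```python
def family_wise_error_rate(alpha: float, m: int) -> float:
    """Probability of at least one false positive among m independent tests."""
    return 1.0 - (1.0 - alpha) ** m


for m in (1, 5, 20, 50):
    print(f"m = {m:>2}: FWER = {family_wise_error_rate(0.05, m):.3f}")
```

The output shows how quickly the risk grows: a single test keeps the 5% risk, while 20 tests already give roughly a 64% chance of at least one false positive.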

Overview of “universal adjusters” of the level of significance

| Method | Description | Advantage | Disadvantage |
|---|---|---|---|
| The Bonferroni method | Controls the Family-Wise Error Rate (FWER). Keeps the chance of making even one false positive at or below 5% (or your chosen level of significance). Never use this method (better to use one of the below recommended methods). | Very simple to understand. Does not require that each test is independent. | “Very conservative” making it hard to find statistical significance if you calculate many p-values. |
| The Dunn-Šidák correction | Controls the Family-Wise Error Rate (FWER). Keeps the chance of making even one false positive at 5% (or your chosen level of significance). Never use this method (better to use one of the below recommended methods). | Slightly less conservative than Bonferroni’s method. However, the difference is very small and this method is, like Bonferroni’s method, likely to miss relevant findings. | Less easy to understand. Also requires that every single one of your tests is completely independent of the others (which is often not the case in reality). |
| The Holm-Bonferroni method | Controls the Family-Wise Error Rate (FWER). Keeps the chance of making even one false positive at or below 5% (or your chosen level of significance). Suitable for confirmatory research. | Less conservative compared to Bonferroni’s method, meaning that the chance of finding a true statistical significance is higher than if you use Bonferroni’s method. Does not require that each test is independent. | It is still relatively conservative. If you are doing hundreds of tests, it will still wipe out a lot of potentially valid discoveries. |
| The Benjamini-Hochberg procedure | Instead of controlling the chance of making any false positive, this method controls the False Discovery Rate (FDR), meaning it accepts a small, known percentage of false positives in exchange for a much higher chance of discovering true significant differences. Suitable for exploratory research. | Massively more statistical power than any of the above methods. It is the gold standard for exploratory data analysis where you are screening dozens or hundreds of variables to see what looks promising. You use BH when you want to cast a wide net to find leads for future, more targeted research. This is the best approach when calculating many p-values (roughly more than 20). Valid when the tests are independent or positively dependent, which covers many practical situations. | You will get some false positive p-values. |

The Bonferroni Method

This simple method means that we divide the desired overall level of significance (often 0.05) by the number of p-values calculated. In an example with 50 p-values calculated, a Bonferroni adjustment means that only p-values ≤0.001 (0.05/50) should be considered statistically significant, which may be difficult to achieve. Hence, Bonferroni’s method is not suitable if you calculate many p-values. The Holm-Bonferroni method and the Benjamini-Hochberg procedure perform much better, so use one of those instead.
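As a minimal sketch, the Bonferroni adjustment is a single division. The function names and the five example p-values are illustrative assumptions.

```python
def bonferroni_threshold(alpha: float, m: int) -> float:
    """Per-test threshold: the overall alpha divided by the number of tests."""
    return alpha / m


def bonferroni_significant(p_values: list[float], alpha: float = 0.05) -> list[bool]:
    """Flag each p-value that is at or below the Bonferroni threshold."""
    threshold = bonferroni_threshold(alpha, len(p_values))
    return [p <= threshold for p in p_values]


print(bonferroni_threshold(0.05, 50))   # threshold for 50 p-values

example = [0.060, 0.039, 0.035, 0.015, 0.005]   # illustrative p-values
print(bonferroni_significant(example))          # per-test threshold is 0.05 / 5
```

Every p-value is compared with the same threshold, which is why the method becomes so strict when the number of p-values is large.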

The Dunn-Šidák correction

The Dunn-Šidák correction (or simply the Šidák correction) is, like Bonferroni’s method, a historical footnote. The Holm-Bonferroni method and the Benjamini-Hochberg procedure perform much better, so use one of those instead.
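For comparison, the Šidák per-test threshold is usually written as 1-(1-alpha)^(1/m). This formula is not stated above and is added here as background; it assumes independent tests. The sketch prints it next to the Bonferroni threshold alpha/m to show how small the difference is. The function name is hypothetical.

```python
def sidak_threshold(alpha: float, m: int) -> float:
    """Per-test threshold giving an overall FWER of alpha for m independent tests."""
    return 1.0 - (1.0 - alpha) ** (1.0 / m)


alpha = 0.05
for m in (5, 20, 50):
    print(f"m = {m:>2}: Sidak = {sidak_threshold(alpha, m):.6f}, "
          f"Bonferroni = {alpha / m:.6f}")
```

The Šidák threshold is always slightly larger than the Bonferroni threshold, but the two agree to several decimal places.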

The Holm-Bonferroni Method

Controls the Family-Wise Error Rate (FWER) just like the standard Bonferroni, meaning it keeps the chance of making even one false positive at or below 5% (or your chosen alpha). Instead of treating every test the same, Holm-Bonferroni uses a “step-down” approach. It is essentially a smarter, less punishing version of the standard Bonferroni correction. The procedure, step by step:

- Rank your p-values: Order all your p-values from smallest (most significant) to largest (least significant).

- Apply a sliding threshold: Instead of dividing your alpha (for example 0.05) by the total number of tests for every single test, the threshold changes depending on the rank of the p-value. The formula for the adjusted threshold for the i-th ranked p-value is:

Threshold = alpha / (m - i + 1)

(Where alpha is your chosen level of significance, for example 0.05, m is the total number of p-values calculated, and i is the rank of the p-value.)

- The Step-Down: For your smallest p-value, the threshold is exactly the same as the one calculated using the standard Bonferroni method. If your p-value is equal to or below this, it is significant. For your second smallest p-value, the threshold becomes slightly easier to achieve compared to the one calculated by the Bonferroni method. You then continue down the list. With each step, the threshold becomes larger (easier to achieve) compared to the threshold calculated by the Bonferroni method. As soon as you encounter a p-value that is larger than its calculated threshold, you stop. That variable, and all variables following it on the list, are declared statistically non-significant.

In a “Step-Down” procedure like Holm-Bonferroni, the p-values function like a series of gates. To reach the final gate, you must first pass through all the preceding ones. When the calculator encounters the first non-significant value, it closes the door completely. According to the rules of Holm-Bonferroni, that p-value and all subsequent p-values are then declared non-significant—regardless of how small they might be in relation to their own theoretical thresholds. This is the “penalty” inherent in Holm-Bonferroni: if one link in the chain breaks, the rest of the tests fall with it to protect against false positives.
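The step-down procedure can be sketched as follows. This is an illustrative implementation, not the code behind the calculator on this page; the function name `holm_bonferroni` and the example p-values are assumptions.

```python
def holm_bonferroni(p_values: list[float], alpha: float = 0.05) -> list[bool]:
    """Return, in the original order, whether each p-value is significant."""
    m = len(p_values)
    # Positions of the p-values, ordered from smallest to largest p-value.
    order = sorted(range(m), key=lambda idx: p_values[idx])
    significant = [False] * m
    for rank, idx in enumerate(order, start=1):
        threshold = alpha / (m - rank + 1)
        if p_values[idx] > threshold:
            break  # first closed gate: this and all larger p-values fail
        significant[idx] = True
    return significant


p_values = [0.060, 0.039, 0.035, 0.015, 0.005]   # illustrative p-values
print(holm_bonferroni(p_values))

# The third p-value is below its own threshold (0.05 / 1) but is still
# non-significant, because the second p-value already failed (0.04 > 0.05 / 2).
print(holm_bonferroni([0.001, 0.04, 0.045]))
```

The `break` statement is the closing gate: once one p-value fails, nothing after it in the ranking is examined.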

Holm-Bonferroni calculator

The Benjamini-Hochberg Procedure

Controls the False Discovery Rate (FDR). This is a massive shift in statistical philosophy. Instead of trying to avoid even a single false positive, the BH procedure accepts that false positives will happen. Its goal is to control the percentage of your “significant” findings that are actually false. If you set your FDR to 0.05, it means: “Of all the variables I declare significant today, I am okay with, on average, 5% of them being false positives.” The procedure, step by step:

- Rank your p-values: Order them from smallest on top to the largest at the bottom of the list.

- Calculate a critical value for each p-value: The threshold grows much faster than in the Holm-Bonferroni method. The formula is:

Critical Value = (i/m) * Q

(Where i is the rank, m is the total number of p-values calculated, and Q is your chosen False Discovery Rate, usually 0.05.)

- The Step-Up: You look at your list and find the largest p-value that is smaller than or equal to its corresponding critical value. That variable, and every variable with a smaller p-value, is declared statistically significant.

The step-up procedure might be a bit difficult to grasp, so let us look at an example. In the calculator below, keep alpha at 0.05, and enter the following p-values: 0.060, 0.039, 0.035, 0.015, 0.005. This is how each p-value should be ranked and compared with its corresponding critical value:

- Rank 1: 0.005 (Critical Value: 1/5 * 0.05 = 0.010) -> Passes (0.005 < 0.010)

- Rank 2: 0.015 (Critical Value: 2/5 * 0.05 = 0.020) -> Passes (0.015 < 0.020)

- Rank 3: 0.035 (Critical Value: 3/5 * 0.05 = 0.030) -> Fails (0.035 > 0.030)

- Rank 4: 0.039 (Critical Value: 4/5 * 0.05 = 0.040) -> Passes (0.039 < 0.040)

- Rank 5: 0.060 (Critical Value: 5/5 * 0.05 = 0.050) -> Fails (0.060 > 0.050)

Now comes the tricky part. Because Benjamini-Hochberg is a step-up procedure, the calculator starts at the bottom (Rank 5) and works its way up until it finds a “Pass”. Because Rank 4 passes, everything ranked 1 through 4 is declared significant, even a p-value that fails when compared to its own critical value. Hence, Rank 4 passes because it is below its own critical value, while Ranks 1 to 3 are declared significant irrespective of their critical values due to the step-up rule. Rank 3 is the case in point: 0.035 is above its critical value of 0.030 but is still declared significant.
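The step-up rule can be sketched in code using the five p-values from the example. This is an illustrative implementation under the assumption that a p-value passes when it is at or below its critical value; it is not the code behind the calculator, and the function name `benjamini_hochberg` is chosen here.

```python
def benjamini_hochberg(p_values: list[float], q: float = 0.05) -> list[bool]:
    """Return, in the original order, whether each p-value is significant."""
    m = len(p_values)
    # Positions of the p-values, ordered from smallest to largest p-value.
    order = sorted(range(m), key=lambda idx: p_values[idx])
    # Step-up: find the largest rank whose p-value is at or below (rank / m) * q.
    largest_passing_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * q:
            largest_passing_rank = rank
    # Everything ranked at or below that rank is declared significant.
    significant = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= largest_passing_rank:
            significant[idx] = True
    return significant


p_values = [0.060, 0.039, 0.035, 0.015, 0.005]
print(benjamini_hochberg(p_values))
```

In this sketch 0.035 is flagged as significant even though it exceeds its own critical value, because 0.039 at Rank 4 passes.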

Benjamini-Hochberg calculator

Tips on which adjusting method to use

- Do not use the standard Bonferroni correction if your purpose is solely to evaluate multiple p-values. The other methods are always better if your goal is to maximize the number of detected significances and you only care about p-values. However, if you want to (or are required to) report confidence intervals for your data adjusted for the fact that you are calculating multiple confidence intervals, the classic Bonferroni correction remains a highly adequate and justified choice (see below).

- Having a small number of tests (like 5, 10, or 15) usually means you have specific, pre-planned hypotheses. You aren’t “fishing” for data; you are trying to confirm specific theories. In these cases, you want strict protection against false positives, so Holm-Bonferroni (controlling the FWER) is the correct choice. Use Holm-Bonferroni when you are doing confirmatory research. If a false positive would result in changing a clinical guideline, approving a useless drug, or making a definitive claim in a high-impact journal, you must use Holm-Bonferroni to guarantee that your overall risk for getting one or several false positive results is capped at 5%. Even if you have 30 p-values, if the stakes are high, you have to take the power hit and use Holm-Bonferroni.

- Use Benjamini-Hochberg when you are doing exploratory research. If your goal is simply to find “variables of interest” to test again in a future, more targeted study, Benjamini-Hochberg is perfect. You accept a 5% false-discovery rate because a false positive here just means a slight waste of time in the next study, not a catastrophic real-world error. You can use BH even if you only have 12 p-values, as long as your goal is purely exploratory. When you cross into 20, 50, or 100+ p-values, you are usually entering the realm of exploratory research (e.g., screening dozens of genes, biomarkers, or demographic variables). In exploratory research, Benjamini-Hochberg (controlling the FDR) is absolutely the right choice because you want to cast a wide net and don’t want a strict FWER penalty to hide valuable leads.

If you perform 100 tests and use Holm-Bonferroni with alpha = 0.05, the goal is that, if the whole study were repeated many times, at least 95 out of 100 repetitions would contain zero false positives. If, on the other hand, you use Benjamini-Hochberg (FDR) at 5%, you accept that approximately 5% of the results you declare significant are actually false. The difference in stringency is therefore enormous!

Scheffé’s Method and Tukey’s HSD

These are methods for follow-ups (post-hoc tests) to an ANOVA. If the ANOVA yields a significant p-value (meaning at least one group is different from the others), you run Tukey or Scheffé to find out exactly which groups are driving that difference. Tukey’s HSD compares all pairs of groups. With Scheffé’s method you aren’t limited to simple pairwise comparisons (e.g., Group A vs. Group B); you can also compare the average of Group A and B against Group C, and it handles unequal sample sizes between groups perfectly well. These approaches are tailored to group comparisons and they are not “universal adjusters” of the level of significance.

Multiple testing over time of the same outcome

While Holm-Bonferroni and Benjamini-Hochberg are your go-to methods for testing dozens of variables simultaneously at the end of a study, the O’Brien-Fleming boundary and the Pocock boundary are methods for testing a single primary variable at different points in time while a study is still ongoing. The typical example is interim analysis of intervention studies. Interim analysis creates the exact same Family-Wise Error Rate (FWER) problem we discussed earlier. If you test your data at a 0.05 level of significance at Year 1, Year 2, Year 3, Year 4, and Year 5, your overall chance of a false positive inflates to around 14%. You are essentially “fishing” for a significant result by checking the data repeatedly. To keep the overall error rate at 5%, you have to “spend” your alpha (your 0.05 significance level) carefully across those different looks.
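The inflation from repeated looks can be checked with a small simulation. This is a sketch under stated assumptions: no true effect exists, the five looks are equally spaced, and the test statistic at each look is a z statistic built from the accumulated data. The function name `overall_false_positive_rate` is hypothetical.

```python
import random
from statistics import NormalDist


def overall_false_positive_rate(n_looks: int = 5, alpha: float = 0.05,
                                n_trials: int = 20_000, seed: int = 1) -> float:
    """Estimate the chance of at least one 'significant' look when the null is true.

    Each trial accumulates one standard-normal increment per look (equally
    sized stages), so the z statistic at look k is the running sum divided
    by sqrt(k). The same unadjusted two-sided threshold is used at every look.
    """
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1.0 - alpha / 2.0)  # about 1.96 for alpha = 0.05
    false_positives = 0
    for _ in range(n_trials):
        running_sum = 0.0
        for look in range(1, n_looks + 1):
            running_sum += rng.gauss(0.0, 1.0)
            if abs(running_sum / look ** 0.5) > z_crit:
                false_positives += 1
                break  # the trial would have been stopped as "significant"
    return false_positives / n_trials


rate = overall_false_positive_rate()
print(f"estimated overall false positive rate with 5 looks: {rate:.3f}")
```

The looks are correlated because each one reuses the earlier data, which is why the inflation is smaller than the 1-(1-0.05)^5 that five independent tests would give.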

The Pocock boundary lowers the level of significance and keeps it constant during the study. The same level of significance is applied at each interim analysis. For example, if data are analysed four times, at 25%, 50%, 75% and 100% of observations collected, the level of significance at each analysis is set to about 0.018.

The O’Brien-Fleming method is an alpha-spending function. It dictates exactly what your p-value threshold must be at each interim look. Its defining characteristic is that it makes it incredibly difficult to stop a trial early, but it leaves the final threshold almost completely unchanged. If you plan 4 looks at the data (3 interim analyses and 1 final analysis), the O’Brien-Fleming p-value boundaries look roughly like this:

- Look 1 (25% of data): p-value must be ≤ 0.00001 to stop early.

- Look 2 (50% of data): p-value must be ≤ 0.0013 to stop early.

- Look 3 (75% of data): p-value must be ≤ 0.012 to stop early.

- Final Look (100% of data): p-value must be ≤ 0.043 for final significance. (Notice how the final p-value is 0.043, which is very close to the standard 0.05!)

The O’Brien-Fleming method preserves the final p-value. Because you “spend” very little of your alpha during the early looks, your threshold at the end of the trial is still very close to 0.05. Furthermore, the O’Brien-Fleming method prevents premature stopping. Early in a trial, data can be highly volatile. A few lucky successes can make a drug look like a miracle cure. The massive hurdle at Look 1 (e.g., p ≤ 0.00001) ensures you only stop the trial early if the evidence is absolutely overwhelming. The O’Brien-Fleming boundary should be your preferred go-to method for testing a single primary variable at different points in time while a study is still ongoing.

In what context should I adjust the level of significance?

The level of significance needs to be lowered if you present multiple p-values. Do I need to consider all p-values presented in a single table, all p-values presented in one manuscript or all p-values I have ever calculated in my life? If the latter were correct, it would mean that all experienced statisticians would be out of work because of their need for hefty adjustments of the level of significance.

It would be reasonable to adjust for all p-values in a manuscript considered to be a result. P-values are sometimes calculated in correlations or simple regressions purely as a sorting mechanism to decide which variables to include in a final multivariable regression and these preliminary p-values should not be considered as a result. A reasonable suggestion might be to adjust the level of significance only for primary outcomes, a view presented by Steve Grambow in this video:

Confidence intervals

Within good scientific practice (and in many high-impact journals), it is often considered insufficient to merely report p-values. Reviewers and readers want to know the magnitude of the difference (the effect size), which is why confidence intervals are reported. Often, unadjusted confidence intervals are reported even when multiple intervals are calculated. However, if a journal requires you to adjust your confidence intervals when computing several of them, this is where the classic Bonferroni method (which is a single-step procedure) shines.

It is very easy to create simultaneous confidence intervals for all your comparisons using the Bonferroni method. If you perform 5 tests with a desired overall level of significance of 5% (α = 0.05), you simply divide this by 5. Your new alpha for each interval becomes 0.01. You then simply calculate a 99% confidence interval (1 – 0.01) for each individual test.
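The calculation can be sketched as follows. The example assumes a normal-approximation interval (estimate plus or minus z times the standard error); the estimate 1.8 and standard error 0.6 are made-up numbers, and the function names are hypothetical.

```python
from statistics import NormalDist


def bonferroni_confidence_level(alpha: float, m: int) -> float:
    """Confidence level to use for each of m simultaneous intervals."""
    return 1.0 - alpha / m


def bonferroni_interval(estimate: float, std_error: float,
                        alpha: float, m: int) -> tuple[float, float]:
    """Two-sided normal-approximation interval at the Bonferroni-adjusted level."""
    z = NormalDist().inv_cdf(1.0 - alpha / (2.0 * m))
    return estimate - z * std_error, estimate + z * std_error


print(bonferroni_confidence_level(0.05, 5))   # level for each of 5 intervals

# Hypothetical estimate and standard error for one of the 5 comparisons.
low, high = bonferroni_interval(estimate=1.8, std_error=0.6, alpha=0.05, m=5)
print(f"adjusted interval: {low:.2f} to {high:.2f}")
```

Because every interval uses the same adjusted level, no ranking of p-values is needed, which is what makes the single-step Bonferroni method convenient here.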

Holm-Bonferroni is a step-down procedure. It ranks your p-values and dynamically adjusts the thresholds depending on how many tests have already passed. This works excellently for p-values, but it is mathematically extremely complicated (and often impossible in standard software) to translate these dynamic thresholds into adjusted confidence intervals. If you try to construct confidence intervals based on Holm-Bonferroni’s stepwise decisions, you will often end up with intervals that contradict your test decisions (for example, a test might be declared significant, but its confidence interval still overlaps zero).